
Results for "hadoop mapreduce"

Status: Free Trial

Skills you'll gain: Apache Hadoop, Apache Spark, PySpark, Apache Hive, Big Data, IBM Cloud, Kubernetes, Docker (Software), Scalability, Data Processing, Distributed Computing, Performance Tuning, Data Transformation, Debugging

Rating: 4.4 out of 5 stars (465 reviews) · Intermediate · Course · 1 - 3 Months

Status: New · Free Trial

Skills you'll gain: Apache Hadoop, Apache Hive, Big Data, Database Design, Extensible Markup Language (XML), Databases, JSON, Data Processing, Data Warehousing, Distributed Computing, Data Analysis, Scalability, Case Studies, Analytics, Data Pipelines, Extract, Transform, Load, Query Languages, Social Media, Data Cleansing, Data Integration

Intermediate · Specialization · 3 - 6 Months

Status: New · Free Trial

Skills you'll gain: Apache Hive, NoSQL, Apache Hadoop, Extract, Transform, Load, Big Data, Data Warehousing, Data Pipelines, Data Infrastructure, Cloud Management, Databases, SQL, Performance Tuning, Data Processing, Real Time Data, Query Languages, Database Management, Data Transformation, Data Analysis Expressions (DAX), Scalability, Distributed Computing

Beginner · Specialization · 3 - 6 Months

Status: Free Trial

Johns Hopkins University

Skills you'll gain: Apache Hadoop, Big Data, Apache Hive, Apache Spark, NoSQL, Data Infrastructure, File Systems, Data Processing, Data Management, Analytics, Data Science, SQL, Query Languages, Data Manipulation, Java, Data Structures, Distributed Computing, Scripting Languages, Data Transformation, Performance Tuning

Rating: 4.4 out of 5 stars (7 reviews) · Intermediate · Specialization · 3 - 6 Months

Status: New · Free Trial

Skills you'll gain: Apache Hadoop, Apache Hive, Big Data, Data Analysis, Data Processing, Query Languages, Unstructured Data, Data Transformation, Scripting

Mixed · Course · 1 - 4 Weeks

Status: New · Free Trial

Skills you'll gain: Apache Hive, Big Data, JSON, Case Studies, Apache Hadoop, People Analytics, Policy Analysis, Research, and Development, Analytics, Data Analysis, Social Sciences, Data-Driven Decision-Making, Data Processing, Business Analytics, Data Manipulation, Data Transformation, Query Languages

Mixed · Course · 1 - 4 Weeks


Status: New · Free Trial

Skills you'll gain: PySpark, Apache Spark, MySQL, Data Pipelines, Scala Programming, Extract, Transform, Load, Customer Analysis, Apache Hadoop, Classification And Regression Tree (CART), Predictive Modeling, Applied Machine Learning, Data Processing, Advanced Analytics, Big Data, Apache Maven, Statistical Machine Learning, Unsupervised Learning, SQL, Apache, Python Programming

Rating: 4.6 out of 5 stars (38 reviews) · Beginner · Specialization · 1 - 3 Months

Status: New · Free Trial

Skills you'll gain: Apache Hadoop, Big Data, Data Infrastructure, Social Network Analysis, Data Processing, Program Development, Distributed Computing, Java, Text Mining, Graph Theory, File Systems, Debugging

Mixed · Course · 1 - 3 Months

University of Colorado Boulder

Skills you'll gain: Object Oriented Design, User Story, Systems Engineering, Real-Time Operating Systems, Unsupervised Learning, Field-Programmable Gate Array (FPGA), New Product Development, Sustainable Business, Delegation Skills, Sampling (Statistics), Supplier Management, Failure Analysis, Computer Vision, Accountability, Data Ethics, Data Mining, Sustainability Reporting, Statistical Modeling, Goal Setting, Database Design

Earn a degree

Degree · 1 - 4 Years

Status: New · Free Trial

Pearson

Skills you'll gain: PySpark, Apache Hadoop, Apache Spark, Big Data, Apache Hive, Data Lakes, Analytics, Data Pipelines, Data Processing, Data Import/Export, Data Integration, Linux Commands, Data Mapping, Linux, File Systems, Text Mining, Data Management, Distributed Computing, Java, C++ (Programming Language)

Intermediate · Specialization · 1 - 4 Weeks

Status: Free Trial

Skills you'll gain: NoSQL, Apache Spark, Apache Hadoop, MongoDB, PySpark, Extract, Transform, Load, Apache Hive, Databases, Apache Cassandra, Big Data, Machine Learning, Applied Machine Learning, Generative AI, Machine Learning Algorithms, IBM Cloud, Kubernetes, Supervised Learning, Distributed Computing, Docker (Software), Database Management

Rating: 4.5 out of 5 stars (810 reviews) · Beginner · Specialization · 3 - 6 Months

Status: New · Free Trial

Skills you'll gain: Apache Hadoop, Apache Hive, Big Data, Data Warehousing, Data Integration, Data Processing, Enterprise Application Management, Performance Tuning, Data Manipulation, Data Analysis, Distributed Computing, Data Import/Export, SQL, Data Transformation, Query Languages

Mixed · Course · 1 - 3 Months


In summary, here are 10 of our most popular Hadoop MapReduce courses:

- Introduction to Big Data with Spark and Hadoop: IBM

- Hadoop Big Data Analytics & Projects Mastery: EDUCBA

- Hadoop & Big Data Foundations Mastery Course: EDUCBA

- Big Data Processing Using Hadoop: Johns Hopkins University

- Hadoop Projects: Apply MapReduce, Pig & Hive: EDUCBA

- Big Data with Hadoop: Apply MapReduce, Pig & Hive: EDUCBA

- Spark and Python for Big Data with PySpark: EDUCBA

- MapReduce with Hadoop: Analyze, Design & Deploy: EDUCBA

- Master of Science in Data Science: University of Colorado Boulder

- Hadoop and Spark Fundamentals: Pearson

Frequently Asked Questions about Hadoop MapReduce

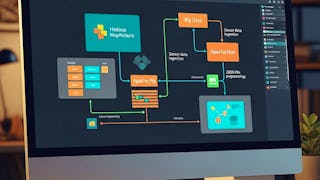

Hadoop MapReduce is a programming model and software framework for processing and analyzing large datasets in a distributed computing environment. It is a core component of Apache Hadoop, which is widely used in big data processing. MapReduce lets users write parallelizable algorithms that process large amounts of data by splitting the input into smaller chunks and distributing the work across a cluster of computers. A job has two main phases: the Map phase, where each input split is processed in parallel and emitted as intermediate key-value pairs, and the Reduce phase, where the values for each key are aggregated to produce the final output. Between the two, the framework shuffles and sorts the intermediate pairs so that all values for a given key reach the same reducer. Hadoop MapReduce is particularly useful for tasks like data mining, log processing, and building search indexes, because it can process datasets too large for a single machine.
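To make the two phases concrete, here is a minimal in-memory sketch of a word count written in Python. It only imitates the model on a single machine and does not use Hadoop itself; the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) are illustrative assumptions, not part of any Hadoop API.

```python
from collections import defaultdict


def map_phase(line):
    """Emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs):
    """Group intermediate values by key, as a framework would between phases."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Aggregate all values collected for one key."""
    return key, sum(values)


def word_count(lines):
    """Run map, shuffle, and reduce in sequence over a list of lines."""
    intermediate = [pair for line in lines for pair in map_phase(line)]
    grouped = shuffle(intermediate)
    # Iterating keys in sorted order mirrors the sorted input a reducer receives.
    return dict(reduce_phase(key, grouped[key]) for key in sorted(grouped))


if __name__ == "__main__":
    print(word_count(["big data big cluster", "data pipeline"]))
```

On a real cluster each call to the map function would run on a different input split, and the grouping step would move data between machines rather than fill a local dictionary.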

To work with Hadoop MapReduce, you need to learn several skills:

- Programming Languages: Familiarize yourself with Java, as it is the primary language used for writing MapReduce programs. Additionally, understanding Python can also be beneficial.

- Hadoop Basics: Gain a solid understanding of Hadoop's underlying architecture, concepts, and components such as HDFS (Hadoop Distributed File System) and YARN (Yet Another Resource Negotiator).

- MapReduce Concepts: Learn the MapReduce programming model and its basic principles for distributed processing of large data sets.

- Data Manipulation: Acquire skills in data manipulation using techniques like filtering, aggregation, sorting, and joining datasets, as these are fundamental operations performed in MapReduce jobs.

- Distributed Systems: Familiarize yourself with the fundamentals of distributed systems, including concepts like scalability, fault tolerance, and parallel processing.

- Apache Hadoop Ecosystem: Explore the various tools and technologies in the Hadoop ecosystem, such as Apache Hive, Apache Pig, and Apache Spark, which enhance data processing capabilities and provide higher-level abstractions.

- Analytical Skills: Develop analytical thinking and problem-solving abilities to identify suitable MapReduce algorithms and optimize their performance based on the requirements.

- Debugging and Troubleshooting: Learn how to debug and troubleshoot common errors or performance bottlenecks in MapReduce jobs.

- Performance Optimization: Understand techniques for improving the performance of MapReduce jobs, such as data compression, proper cluster configuration, data partitioning, and task tuning.

- Familiarity with Hadoop Tools: Gain hands-on experience with tools like Apache Hadoop, Apache Ambari, Cloudera, Hortonworks, or MapR, which are commonly used for managing Hadoop clusters and monitoring job execution.
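The data manipulation operations named in the list (filtering, aggregation, sorting, joining) can be combined in one job. The following is a hedged sketch of a reduce-side join, simulated in plain Python rather than run on Hadoop. The record layouts (`(user_id, name)` and `(user_id, amount)`), the `min_amount` parameter, and all function names are assumptions made for illustration.

```python
from collections import defaultdict


def map_users(record):
    """Tag each user record so the reducer can tell the two sources apart."""
    user_id, name = record
    yield user_id, ("user", name)


def map_orders(record, min_amount=0.0):
    """Filter small orders on the map side, then tag what remains."""
    user_id, amount = record
    if amount >= min_amount:
        yield user_id, ("order", amount)


def reduce_join(user_id, tagged_values):
    """Join both sources on user_id and aggregate the order amounts."""
    names = [value for tag, value in tagged_values if tag == "user"]
    amounts = [value for tag, value in tagged_values if tag == "order"]
    for name in names:
        if amounts:  # inner join: skip users with no surviving orders
            yield user_id, name, sum(amounts)


def run_join(users, orders, min_amount=0.0):
    """Simulate map, shuffle, and reduce for the join on one machine."""
    groups = defaultdict(list)
    for record in users:
        for key, value in map_users(record):
            groups[key].append(value)
    for record in orders:
        for key, value in map_orders(record, min_amount):
            groups[key].append(value)
    results = []
    for key in sorted(groups):  # keys reach the reducer in sorted order
        results.extend(reduce_join(key, groups[key]))
    return results


if __name__ == "__main__":
    users = [(1, "ana"), (2, "bo"), (3, "cy")]
    orders = [(1, 10.0), (1, 5.0), (2, 2.0), (4, 50.0)]
    print(run_join(users, orders, min_amount=3.0))
```

Filtering in the mapper rather than the reducer is a common design choice because it reduces the amount of intermediate data that has to be shuffled.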

Remember, continuous learning and staying updated with the latest advancements in Hadoop and Big Data technologies will ensure your proficiency and success in working with Hadoop MapReduce.

With Hadoop MapReduce skills, you can explore a range of job opportunities in Big Data and the Hadoop ecosystem. Some roles that call for Hadoop MapReduce skills include:

- Big Data Engineer: As a Big Data Engineer, you would be responsible for designing, building, and maintaining large-scale data processing systems using Hadoop MapReduce. Your role would involve developing data pipelines, optimizing data workflows, and ensuring the efficient processing of big data.

- Big Data Analyst: With Hadoop MapReduce skills, you can work as a Big Data Analyst, where your primary focus would be on analyzing large datasets using Hadoop MapReduce. You would extract relevant insights, discover patterns, and provide actionable recommendations to stakeholders based on the analysis.

- Data Scientist: Hadoop MapReduce skills are valuable for Data Scientists as well. With these skills, you can effectively handle and process massive datasets used for training machine learning models. You would leverage Hadoop MapReduce to preprocess, clean, and transform data, making it suitable for advanced analytics and predictive modeling.

- Hadoop Developer: As a Hadoop Developer, you would specialize in developing and maintaining Hadoop-based applications, including MapReduce jobs. Your responsibilities would involve writing efficient MapReduce code, troubleshooting performance issues, and ensuring seamless integration with the Hadoop ecosystem.

- Data Engineer: Hadoop MapReduce skills are highly beneficial for Data Engineers tasked with building scalable and distributed data processing systems. You would design and implement data pipelines using Hadoop MapReduce, ensuring reliable data ingestion, transformation, and storage.

- Hadoop Administrator: With expertise in MapReduce, you can work as a Hadoop Administrator responsible for managing and optimizing Hadoop clusters. Your role would involve configuring and tuning MapReduce jobs, monitoring cluster performance, and troubleshooting issues to ensure smooth functioning.

- Technology Consultant: As a Technology Consultant, you can provide guidance and expertise on incorporating Hadoop MapReduce into businesses' existing infrastructure. You would help organizations leverage these skills to solve complex data processing challenges and maximize the potential of their data.

Remember, the demand for Hadoop MapReduce skills can vary between industries and job markets. Continuously keeping up with new developments and expanding your knowledge of related technologies like Apache Spark and Hadoop ecosystem components can enhance your job prospects even further.

People who are interested in data analysis and data processing, and who have a strong programming background, are best suited to studying Hadoop MapReduce. Those who have experience with distributed systems and are comfortable working with large datasets will also find it beneficial.

There are several topics related to Hadoop MapReduce that you can study. Some of them include:

- Big Data: Since Hadoop MapReduce is a framework for processing large volumes of data, studying big data concepts would be beneficial. This includes understanding data storage, data processing, and data analysis techniques.

- Distributed Computing: MapReduce is designed to distribute the processing of data across multiple nodes in a cluster. Studying distributed computing will help you understand the underlying principles and algorithms used in MapReduce.

- Hadoop Ecosystem: Hadoop MapReduce is just one component of the larger Hadoop ecosystem. Learning about other components like Hadoop Distributed File System (HDFS), YARN, Hive, Pig, and HBase will provide a holistic understanding of big data processing with Hadoop.

- Java Programming: MapReduce programs are typically written in Java, so having a good grasp of Java programming concepts is essential. You can study Java to learn about object-oriented programming, data structures, and algorithms.

- Parallel and Concurrent Programming: MapReduce processes data in parallel across multiple nodes, making it crucial to understand parallel and concurrent programming concepts. Studying topics like multithreading, concurrency control, and synchronization will help you write efficient and scalable MapReduce programs.

- Data Analytics and Machine Learning: MapReduce can be used for data analysis and machine learning tasks. Studying data analytics techniques, statistical analysis, and machine learning algorithms will enable you to utilize MapReduce for these purposes effectively.

- Cloud Computing: With the advent of cloud platforms like Amazon Web Services (AWS) and Google Cloud Platform (GCP), Hadoop is often deployed in the cloud. Understanding cloud computing concepts, virtualization, and cloud-based data processing will be valuable when working with Hadoop MapReduce in a cloud environment.
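As a small illustration of the parallel processing idea in the list above, the sketch below runs a map step over several input splits concurrently and then merges the partial results. It is an assumption-laden stand-in: threads in one Python process play the role of cluster nodes, and in CPython they will not give real CPU parallelism for this workload. The names `map_and_combine`, `merge`, and `parallel_word_count` are hypothetical.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def map_and_combine(split):
    """Count words within one split (a map step plus a combiner-style local sum)."""
    counts = Counter()
    for line in split:
        counts.update(line.lower().split())
    return counts


def merge(partials):
    """Reduce step: add up the per-split partial counts."""
    total = Counter()
    for partial in partials:
        total.update(partial)
    return total


def parallel_word_count(splits, workers=2):
    """Process each split in its own task, then merge the partial results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(map_and_combine, splits))
    return merge(partials)


if __name__ == "__main__":
    splits = [["a b a"], ["b c"], ["a"]]
    print(dict(parallel_word_count(splits)))
```

Because each task touches only its own split and returns a new `Counter`, no locks are needed. Summing locally before merging is the same idea a combiner applies in a MapReduce job to cut down on shuffled data.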

Remember, Hadoop MapReduce is a powerful tool, but it's important to have a strong foundation in the underlying concepts and technologies to use it effectively.

Online Hadoop MapReduce courses offer a convenient and flexible way to enhance your knowledge or learn new Hadoop MapReduce skills. Choose from a wide range of Hadoop MapReduce courses offered by top universities and industry leaders tailored to various skill levels.

When looking to enhance your workforce's skills in Hadoop MapReduce, it's crucial to select a course that aligns with their current abilities and learning objectives. Our Skills Dashboard is an invaluable tool for identifying skill gaps and choosing the most appropriate course for effective upskilling. For a comprehensive understanding of how our courses can benefit your employees, explore the enterprise solutions we offer. Discover more about our tailored programs at Coursera for Business.