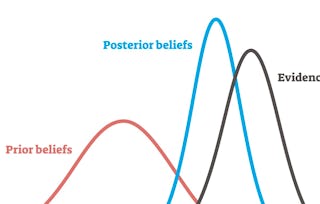

This course describes Bayesian statistics, in which one's inferences about parameters or hypotheses are updated as evidence accumulates. You will learn to use Bayes’ rule to transform prior probabilities into posterior probabilities, and be introduced to the underlying theory and perspective of the Bayesian paradigm. The course will apply Bayesian methods to several practical problems, to show end-to-end Bayesian analyses that move from framing the question to building models to eliciting prior probabilities to implementing in R (free statistical software) the final posterior distribution. Additionally, the course will introduce credible regions, Bayesian comparisons of means and proportions, Bayesian regression and inference using multiple models, and discussion of Bayesian prediction.

Bayesian Statistics

(798 reviews)

Skills you'll gain

Details to know

Add to your LinkedIn profile

12 assignments

See how employees at top companies are mastering in-demand skills

Build your subject-matter expertise

- Learn new concepts from industry experts

- Gain a foundational understanding of a subject or tool

- Develop job-relevant skills with hands-on projects

- Earn a shareable career certificate

There are 7 modules in this course

This short module introduces basics about Coursera specializations and courses in general, this specialization: Statistics with R, and this course: Bayesian Statistics. Please take several minutes read this information. Thanks for joining us in this course!

What's included

1 video5 readings1 discussion prompt

<p>Welcome! Over the next several weeks, we will together explore Bayesian statistics. <p>In this module, we will work with conditional probabilities, which is the probability of event B given event A. Conditional probabilities are very important in medical decisions. By the end of the week, you will be able to solve problems using Bayes' rule, and update prior probabilities.</p><p>Please use the learning objectives and practice quiz to help you learn about Bayes' Rule, and apply what you have learned in the lab and on the quiz.

What's included

9 videos4 readings3 assignments

In this week, we will discuss the continuous version of Bayes' rule and show you how to use it in a conjugate family, and discuss credible intervals. By the end of this week, you will be able to understand and define the concepts of prior, likelihood, and posterior probability and identify how they relate to one another.

What's included

10 videos3 readings3 assignments

In this module, we will discuss Bayesian decision making, hypothesis testing, and Bayesian testing. By the end of this week, you will be able to make optimal decisions based on Bayesian statistics and compare multiple hypotheses using Bayes Factors.

What's included

14 videos3 readings3 assignments

This week, we will look at Bayesian linear regressions and model averaging, which allows you to make inferences and predictions using several models. By the end of this week, you will be able to implement Bayesian model averaging, interpret Bayesian multiple linear regression and understand its relationship to the frequentist linear regression approach.

What's included

11 videos3 readings3 assignments

This week consists of interviews with statisticians on how they use Bayesian statistics in their work, as well as the final project in the course.

What's included

3 videos1 reading

In this module you will use the data set provided to complete and report on a data analysis question. Please read the background information, review the report template (downloaded from the link in Lesson Project Information), and then complete the peer review assignment.

What's included

2 readings1 peer review

Earn a career certificate

Add this credential to your LinkedIn profile, resume, or CV. Share it on social media and in your performance review.

Instructors

Offered by

Explore more from Data Analysis

Status: Free Trial

Status: Free TrialUniversity of California, Santa Cruz

Status: Free Trial

Status: Free TrialUniversity of California, Santa Cruz

Status: Free Trial

Status: Free TrialArizona State University

Status: Free Trial

Status: Free TrialIllinois Tech

Why people choose Coursera for their career

Felipe M.

Jennifer J.

Larry W.

Chaitanya A.

Learner reviews

- 5 stars

45.23%

- 4 stars

20.42%

- 3 stars

14.53%

- 2 stars

9.27%

- 1 star

10.52%

Showing 3 of 798

Reviewed on Aug 24, 2017

An interesting and challenging course, would be better with more real examples and explanation as some of the material felt rushed

Reviewed on Jun 2, 2017

Learnt a lot. Though the subject material was hard to grasp first hand, it is good that instructor was readily available to help us through.

Reviewed on Jun 1, 2019

The course could have been more comprehensive and less verbose. It had so much content in a tiny course. Content should be less and more comprehensive.

Open new doors with Coursera Plus

Unlimited access to 10,000+ world-class courses, hands-on projects, and job-ready certificate programs - all included in your subscription

Advance your career with an online degree

Earn a degree from world-class universities - 100% online

Join over 3,400 global companies that choose Coursera for Business

Upskill your employees to excel in the digital economy

Frequently asked questions

We assume you have knowledge equivalent to the prior courses in this specialization.

No. Completion of a Coursera course does not earn you academic credit from Duke; therefore, Duke is not able to provide you with a university transcript. However, your electronic Certificate will be added to your Accomplishments page - from there, you can print your Certificate or add it to your LinkedIn profile.

To access the course materials, assignments and to earn a Certificate, you will need to purchase the Certificate experience when you enroll in a course. You can try a Free Trial instead, or apply for Financial Aid. The course may offer 'Full Course, No Certificate' instead. This option lets you see all course materials, submit required assessments, and get a final grade. This also means that you will not be able to purchase a Certificate experience.

More questions

Financial aid available,