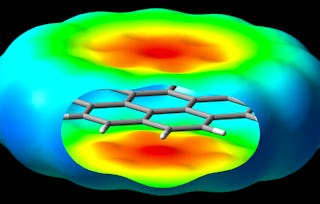

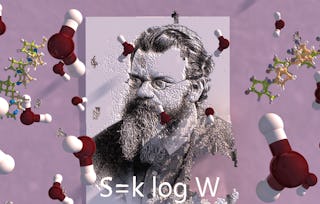

This introductory physical chemistry course examines the connections between molecular properties and the behavior of macroscopic chemical systems.

Statistical Molecular Thermodynamics

Instructor: Dr. Christopher J. Cramer

Top Instructor

26,441 already enrolled

368 reviews

Details to know

9 assignments

There are 9 modules in this course

Learner reviews

- 5 stars: 91.30%
- 4 stars: 7.60%
- 3 stars: 0.81%
- 2 stars: 0.27%
- 1 star: 0%

Showing 3 of 368

Reviewed on Nov 5, 2017

A beautiful, well-taught course. The lecturers were not boring and the teaching was very lively. It opened my mind to the importance of thermodynamics in many real-world applications.

Reviewed on Sep 24, 2017

I loved this course. Very well explained, difficult topics made easy and lovable demonstrations. Absolutely recommended.

Reviewed on Mar 2, 2022

Theoretically sound concepts were well linked to relevant practicability. It has demanded a lot of dedication, but it has definitely been a worthwhile endeavour.