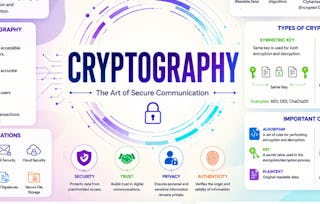

Welcome to Course 2 of Introduction to Applied Cryptography. In this course, you will be introduced to basic mathematical principles and functions that form the foundation for cryptographic and cryptanalysis methods. These principles and functions will be helpful in understanding symmetric and asymmetric cryptographic methods examined in Course 3 and Course 4. These topics should prove especially useful to you if you are new to cybersecurity. It is recommended that you have a basic knowledge of computer science and basic math skills such as algebra and probability.

Mathematical Foundations for Cryptography

This course is part of the Introduction to Applied Cryptography Specialization.

Instructors: William Bahn

21,671 already enrolled

334 reviews

Details to know

9 assignments

Build your subject-matter expertise

- Learn new concepts from industry experts

- Gain a foundational understanding of a subject or tool

- Develop job-relevant skills with hands-on projects

- Earn a shareable career certificate

There are 4 modules in this course

Learner reviews

- 5 stars: 74.55%

- 4 stars: 18.26%

- 3 stars: 4.79%

- 2 stars: 1.19%

- 1 star: 1.19%

Showing 3 of 334

Reviewed on May 31, 2020

Though a little difficult to understand, it is a great course for math lovers out there.

Reviewed on Jul 28, 2020

The course content and the assignments were quite meticulously designed and delivered efficiently.

Reviewed on Feb 19, 2021

Very interesting course, which starts to become challenging for the occasional student and lays the basis for real comprehension of facts always accepted as true.