The motion of bodies in space (such as spacecraft, satellites, and space stations) must be predicted and controlled precisely to ensure safety and efficacy. Kinematics is the field that describes and predicts the motion of these bodies in 3D space. This course covers four major topic areas: an introduction to particle kinematics; rigid body kinematics in two parts, starting with classic descriptions of motion using the direction cosine matrix and Euler angles and concluding with modern descriptors such as quaternions and Classical and Modified Rodrigues parameters; and static attitude determination, which uses modern algorithms to predict and execute relative orientations of bodies in space.

Kinematics: Describing the Motions of Spacecraft

This course is part of Spacecraft Dynamics and Control Specialization

Instructor: Hanspeter Schaub

27,821 already enrolled

341 reviews

What you'll learn

Differentiate a vector as seen by another rotating frame and derive frame dependent velocity and acceleration vectors

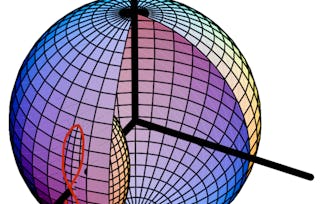

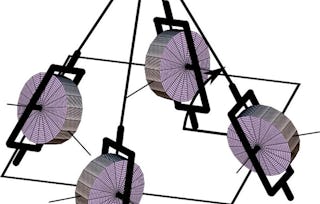

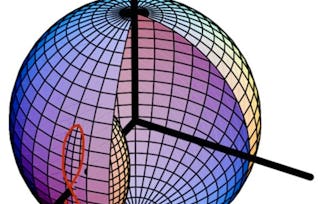

Apply the Transport Theorem to solve kinematic particle problems and translate between various sets of attitude descriptions

Add and subtract relative attitude descriptions and integrate those descriptions numerically to predict orientations over time

Derive the fundamental attitude coordinate properties of rigid bodies and determine attitude from a series of heading measurements
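
Two of the outcomes above can be illustrated with a minimal sketch. It assumes the standard form of the Transport Theorem (inertial derivative = body-frame derivative + ω × r) and represents attitudes as direction cosine matrices, so that adding attitudes is a matrix product and subtracting them uses a transpose. The function names (`inertial_derivative`, `dcm_axis3`, `add_attitudes`, `subtract_attitudes`) and the frame labels B, R, N are illustrative choices, not taken from the course materials.

```python
import math

def cross(a, b):
    """Cross product of two 3-vectors given as sequences of floats."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def inertial_derivative(r_dot_body, omega_bn, r):
    """Transport Theorem: N-frame derivative = B-frame derivative + omega x r.

    Assumption: all three vectors are expressed in B-frame components.
    """
    w_x_r = cross(omega_bn, r)
    return tuple(d + c for d, c in zip(r_dot_body, w_x_r))

def matmul(a, b):
    """Product of two 3x3 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def transpose(a):
    """Transpose of a 3x3 matrix (the inverse of an orthogonal DCM)."""
    return [[a[j][i] for j in range(3)] for i in range(3)]

def dcm_axis3(angle):
    """DCM for a single rotation by `angle` (radians) about the third axis."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c, s, 0.0], [-s, c, 0.0], [0.0, 0.0, 1.0]]

def add_attitudes(br, rn):
    """Attitude addition: [BN] = [BR][RN]."""
    return matmul(br, rn)

def subtract_attitudes(bn, rn):
    """Attitude subtraction: [BR] = [BN][RN]^T."""
    return matmul(bn, transpose(rn))

# A vector fixed in a body that spins at 2 rad/s about its third axis.
velocity = inertial_derivative((0.0, 0.0, 0.0), (0.0, 0.0, 2.0), (1.0, 0.0, 0.0))

# Compose two rotations about the same axis, then recover the relative attitude.
rn = dcm_axis3(0.3)
bn = add_attitudes(dcm_axis3(0.5), rn)
br = subtract_attitudes(bn, rn)
```

Because both rotations here share the same axis, `bn` should match a single 0.8 rad rotation and `br` should recover the 0.5 rad rotation, which makes the sketch easy to check by hand. General three-axis attitudes compose the same way, though the angles no longer simply add.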

There are 4 modules in this course

Learner reviews

- 5 stars: 90.90%

- 4 stars: 6.74%

- 3 stars: 0.87%

- 2 stars: 0.87%

- 1 star: 0.58%

Showing 3 of 341

Reviewed on Oct 18, 2017

Brilliant classes! Absolutely brilliant, enjoyed every bit of it. All you need is that you should love Physics and Maths to attend these classes. If you do, it is an enriching experience for you.

Reviewed on Feb 21, 2020

If you want to learn about spacecraft attitude and would like experience in describing the motion of spacecraft as well as the dynamics of satellites, this course will be the first step.

Reviewed on Aug 5, 2020

I couldn't be happier with this course. I enjoyed every second of it... and yeah... also the assignments.

¹ Some assignments in this course are AI-graded. For these assignments, your data will be used in accordance with Coursera's Privacy Notice.