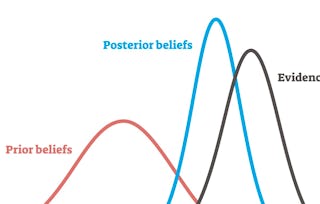

This course describes Bayesian statistics, in which one's inferences about parameters or hypotheses are updated as evidence accumulates. You will learn to use Bayes’ rule to transform prior probabilities into posterior probabilities, and be introduced to the underlying theory and perspective of the Bayesian paradigm. The course applies Bayesian methods to several practical problems, showing end-to-end Bayesian analyses that move from framing the question, to building models, to eliciting prior probabilities, to computing the final posterior distribution in R (free statistical software). Additionally, the course introduces credible regions, Bayesian comparisons of means and proportions, Bayesian regression and inference using multiple models, and Bayesian prediction.
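As a rough illustration of what "transforming prior probabilities into posterior probabilities" means, here is a minimal sketch in Python (the course itself uses R). The coin example, the hypothesis names, and the `posterior` helper are all assumptions made up for this illustration, not course material.

```python
from fractions import Fraction


def posterior(prior, likelihood):
    """Apply Bayes' rule over a discrete set of hypotheses.

    prior:      dict mapping hypothesis -> prior probability
    likelihood: dict mapping hypothesis -> P(data | hypothesis)
    """
    # Unnormalized posterior: prior times likelihood for each hypothesis.
    joint = {h: prior[h] * likelihood[h] for h in prior}
    # The normalizing constant is the marginal probability of the data.
    evidence = sum(joint.values())
    return {h: joint[h] / evidence for h in joint}


# Hypothetical question: is a coin fair, or biased toward heads?
prior = {"fair": Fraction(1, 2), "biased": Fraction(1, 2)}
# Assumed probability of heads under each hypothesis.
p_heads = {"fair": Fraction(1, 2), "biased": Fraction(3, 4)}

# Observe three heads in a row; each posterior becomes the next prior.
belief = prior
for _ in range(3):
    belief = posterior(belief, p_heads)

print({h: str(p) for h, p in belief.items()})
```

Under these assumed numbers, three heads move the probability of the "fair" hypothesis from 1/2 down to 8/35, which shows how beliefs are updated as evidence accumulates.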

Bayesian Statistics

798 reviews

Skills you'll gain

- Model Evaluation

- Statistical Hypothesis Testing

- Data-Driven Decision-Making

- Predictive Modeling

- Bayesian Statistics

- Probability & Statistics

- Statistical Analysis

- Statistical Inference

- Probability

- Statistical Programming

- Statistical Methods

- Regression Analysis

- Probability Distribution

- Statistical Modeling

- Data Analysis

Details to know

12 assignments

There are 7 modules in this course

Learner reviews

- 5 stars: 45.23%

- 4 stars: 20.42%

- 3 stars: 14.53%

- 2 stars: 9.27%

- 1 star: 10.52%

Showing 3 of 798

Reviewed on Aug 24, 2017

An interesting and challenging course. It would be better with more real examples and explanation, as some of the material felt rushed.

Reviewed on Jan 2, 2017

This course is substantially more difficult than the first three, and the material is scarce. However, I must admit that this is one of the courses from which I have learnt the most.

Reviewed on Jun 2, 2017

Learnt a lot. Though the subject material was hard to grasp first hand, it is good that the instructor was readily available to help us through.