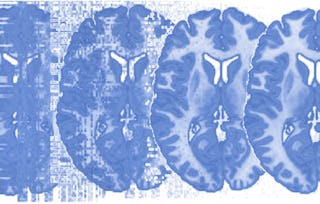

Functional Magnetic Resonance Imaging (fMRI) is the most widely used technique for investigating the living, functioning human brain as people perform tasks and experience mental states. It is a convergence point for work from many disciplines: psychologists, statisticians, physicists, computer scientists, neuroscientists, medical researchers, behavioral scientists, engineers, public health researchers, biologists, and others are coming together to advance our understanding of the human mind and brain. This course covers the analysis of fMRI data and is a continuation of the course “Principles of fMRI, Part 1”.

Principles of fMRI 2

This course is part of Neuroscience and Neuroimaging Specialization

Instructors: Martin Lindquist, PhD, MSc

18,577 already enrolled

249 reviews

Skills you'll gain

- Image Analysis

- Model Evaluation

- Statistical Modeling

- Statistical Inference

- Statistical Methods

- Statistical Analysis

- Correlation Analysis

- Network Analysis

- Regression Analysis

- Psychology

- Advanced Analytics

- Time Series Analysis and Forecasting

- Magnetic Resonance Imaging

- Neurology

- Data Analysis

- Medical Imaging

- Dimensionality Reduction

Details to know

4 assignments

There are 4 modules in this course

Offered by

Johns Hopkins University

Learner reviews

- 5 stars: 77.91%

- 4 stars: 17.26%

- 3 stars: 4.01%

- 2 stars: 0.40%

- 1 star: 0.40%

Reviewed on Jul 29, 2019

Excellent, thorough explanation of the computations and theory underlying fMRI analysis. I particularly enjoyed the emphasis on MVPA. Thanks!

Reviewed on May 23, 2019

Very good course. The best week is the last week. It gives a good overview of fMRI statistical analysis and design of experiments.

Reviewed on Nov 16, 2016

The course was very good; I wish it also included working with sample fMRI data.
