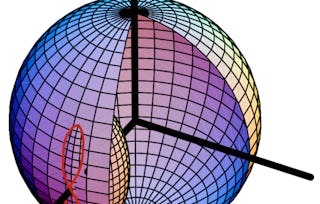

This course trains you in the skills needed to program a specific orientation and achieve precise aiming goals for spacecraft moving through three-dimensional space. First, we cover stability definitions for nonlinear dynamical systems, including the difference between local and global stability. We then analyze and apply Lyapunov's direct method to prove these stability properties, and develop a nonlinear 3-axis attitude pointing control law using Lyapunov theory. Finally, we look at alternate feedback control laws and closed-loop dynamics.

Control of Nonlinear Spacecraft Attitude Motion

This course is part of the Spacecraft Dynamics and Control Specialization

Instructor: Hanspeter Schaub

10,112 already enrolled

70 reviews

What you'll learn

Differentiate between a range of nonlinear stability concepts

Apply Lyapunov’s direct method to argue stability and convergence on a range of dynamical systems

Develop rate and attitude error measures for 3-axis attitude control using Lyapunov theory

Analyze rigid body control convergence with unmodeled torque

There are 4 modules in this course

Offered by

University of Colorado Boulder

Learner reviews

- 5 stars: 82.85%

- 4 stars: 8.57%

- 3 stars: 4.28%

- 2 stars: 4.28%

- 1 star: 0%

Showing 3 of 70

Reviewed on Sep 26, 2020

Course is amazing and well detailed with different live perspectives.

Reviewed on May 29, 2019

Excellent course! Enjoyed it a lot. Learnt a lot as well. Thank you.

Reviewed on Sep 18, 2020

Excellent course but it could have been smoother if the instructor kept himself in loop with people doing the course

¹ Some assignments in this course are AI-graded. For these assignments, your data will be used in accordance with Coursera's Privacy Notice.