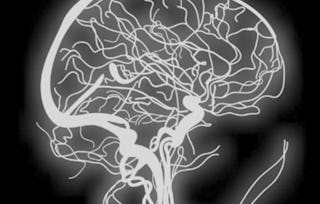

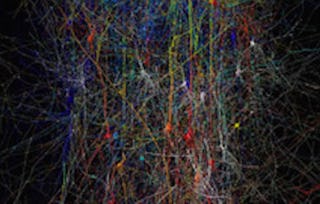

This course introduces basic computational methods for understanding what nervous systems do and for determining how they function. We will explore the computational principles governing various aspects of vision, sensory-motor control, learning, and memory. Specific topics include the representation of information by spiking neurons, the processing of information in neural networks, and algorithms for adaptation and learning. We will use MATLAB/Octave/Python demonstrations and exercises to gain a deeper understanding of the concepts and methods introduced in the course. The course is primarily aimed at third- or fourth-year undergraduates and beginning graduate students, as well as professionals and distance learners interested in learning how the brain processes information.

Computational Neuroscience

Gain insight into a topic and learn the fundamentals.

1,142 reviews

Beginner level

No prior experience required

Flexible schedule

2 weeks at 10 hours a week

Learn at your own pace

95%

Most learners liked this course

Skills you'll gain

- Artificial Neural Networks

- Biology

- Physiology

- Mathematical Modeling

- Applied Machine Learning

- Electrophysiology

- Machine Learning Methods

- Machine Learning Algorithms

- Recurrent Neural Networks (RNNs)

- Supervised Learning

- Sensory Systems Analysis

- Probability Distribution

- Differential Equations

- Computer Vision

- Reinforcement Learning

- Network Model

- Neurology

Details to know

Shareable certificate

Add to your LinkedIn profile

Assessments

9 assignments

Taught in English

91%

of learners achieved a positive career outcome

There are 8 modules in this course

Instructors

Instructor ratings

(217 ratings)

Learner reviews

- 5 stars: 70.57%

- 4 stars: 22.50%

- 3 stars: 4.37%

- 2 stars: 1.75%

- 1 star: 0.78%

SA

Reviewed on Sep 11, 2022

It's an amazing course. You will love the way they teach. I'm so glad to get guidance under Prof. Rajesh through this course. One word: "It's great".

MA

Reviewed on Jul 12, 2017

A good look at mathematical models focusing mainly on the synapse and neuron level. The math came a little fast and furious for my 30+ years antique math training.

HS

Reviewed on May 17, 2020

Excellent course! The field of comp neuro was brought to life by the instructors! The exercises really helped in understanding the content.
